Last week, I did something that made me uncomfortable.

I took a solid assignment I've used before.

I copied the prompt into ChatGPT.

And it gave me a polished, "teacher-pleasing" response in under a minute.

No hesitation.

No struggle.

No thinking journey.

Just performance.

If that's happening to you too, I want you to hear this clearly:

This isn't a "kids these days" problem.

And it's not an "academic honesty" problem first.

It's an assignment design problem.

AI didn't ruin learning.

It exposed how much of what we called learning was actually compliance.

And the good news is, we already have a framework that helps.

It's 70 years old.

And it still works.

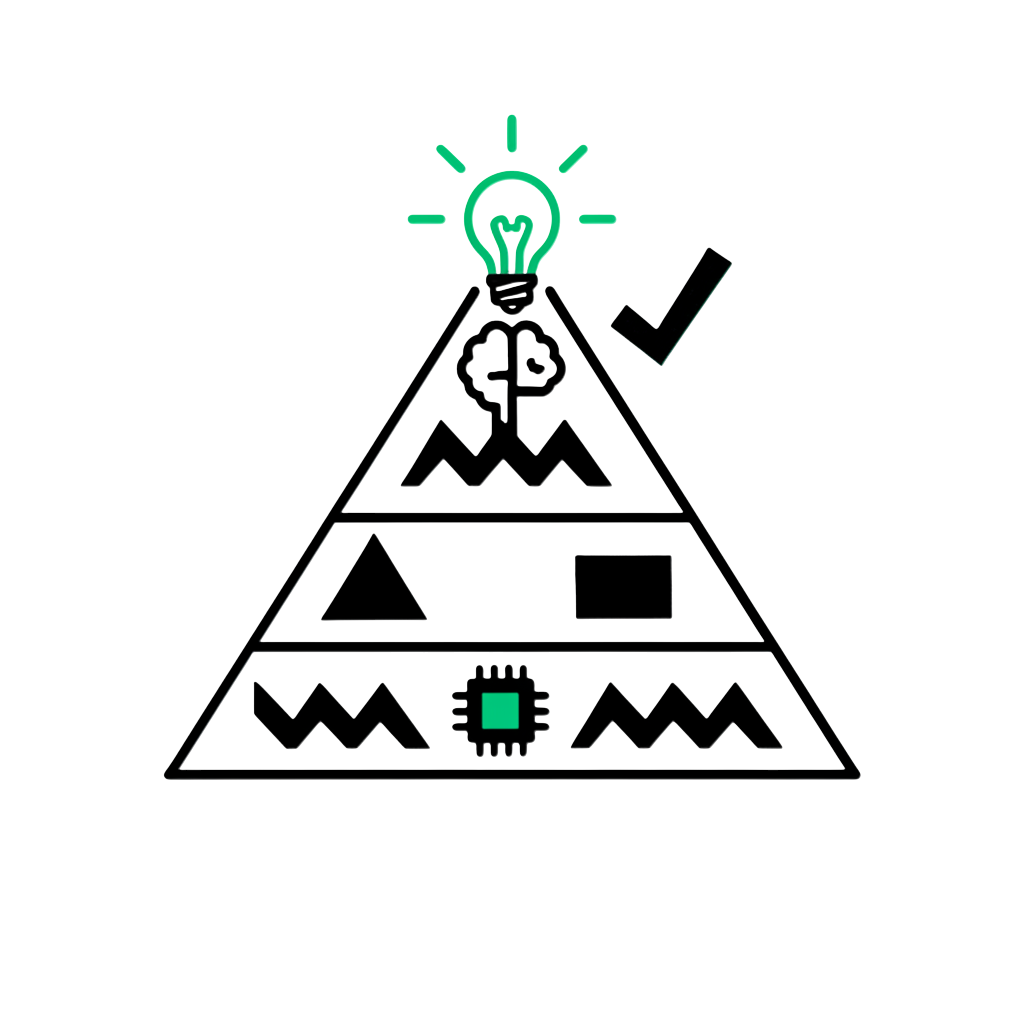

It's Bloom's Taxonomy.

The uncomfortable reality: your best assignment might now be a template

If your task is:

- "Explain…"

- "Summarise…"

- "Write an essay on…"

- "List the causes of…"

Then you're asking students to do what AI does best: generate fluent language on demand.

AI is shockingly strong at recall and explanation.

That doesn't mean your subject is obsolete.

It means the assessment format is.

The moment we accept that, we stop playing whack-a-mole with detection tools.

And we start designing work that makes thinking visible.

Bloom's is not obsolete. It's the map

Bloom's taxonomy (originally published in 1956; revised in 2001, when the levels were renamed as verbs) moves from lower to higher cognitive demand:

Remember → Understand → Apply → Analyze → Evaluate → Create.

Here's the shift I'm asking you to make:

Stop using Bloom's as a ladder students climb only through content.

Start using Bloom's as a defence system for assessment.

Because as you move up the taxonomy, AI stays easy to consult… but it gets harder for AI to replace the learner.

Why?

Because higher levels require things AI doesn't truly have:

- Context

- Stakeholders

- Lived experience

- Ethical accountability

- Iteration based on real feedback

- A defensible personal voice

What I see in schools right now

When I work with international school teams, I notice a predictable pattern.

Teachers start with:

"Let's ban it."

Then they try:

"Let's detect it."

And eventually they admit what nobody wants to say out loud:

The detection conversation is exhausting.

It also damages trust.

And it still doesn't solve the underlying issue—because students can prompt, paraphrase, and humanise AI output quickly.

So the staffroom mood becomes:

"How do I prove it's AI?"

Instead of:

"How do I design learning that can't be outsourced?"

That's the pivot.

The practical redesign: "The New Bloom's" approach

This is the core idea:

AI's ability to do the work for the student drops as you move up Bloom's.

So your assignment design should exploit that gradient.

I'm going to translate that into a teacher workflow + leader workflow you can use tomorrow.

Teacher workflow: turn any task into an AI-resistant task in 15 minutes

Here's my 4-step system.

I use it personally when I'm redesigning assessments with teachers.

Step 1: Run the "AI test" (60 seconds)

Paste your assignment prompt into ChatGPT.

If you get something submission-ready quickly, don't panic.

Just label it:

"This task currently lives in the AI-easy zone."

Step 2: Identify the Bloom level you're actually assessing

A lot of tasks look like "Analyze" but are really "Understand + Write nicely."

Be brutally honest.

If students can succeed by summarising Wikipedia-level knowledge fluently, that's lower Bloom.

Step 3: Add 3 "human anchors"

Choose any three of these:

- Personal context

- Novel local constraints (your school, your community, your classroom)

- Multiple stakeholder perspectives

- Metacognitive reflection (how they thought, not just what they concluded)

- Ambiguity (competing values, trade-offs)

- Iteration (draft → feedback → revision)

- Oral defence (short conference)

- Process visibility (planning log, evidence trail)

- Physical evidence (photos, measurements, observations)

This checklist is the simplest AI-resistance tool I've seen for teachers because it's concrete and scorable.
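
If you like to keep your planning in a script or spreadsheet, here is a minimal sketch of how the checklist could be scored. The nine anchors are the ones listed above; the function name `check_anchors`, the shortened anchor labels, and the default target of three are my own illustrative assumptions, not a standard tool.

```python
# Hypothetical helper for scoring a task against the "human anchors" checklist.
# Anchor labels are shortened from the list above; names are illustrative only.
HUMAN_ANCHORS = (
    "personal context",
    "novel local constraints",
    "multiple stakeholder perspectives",
    "metacognitive reflection",
    "ambiguity",
    "iteration",
    "oral defence",
    "process visibility",
    "physical evidence",
)


def check_anchors(task_anchors, required=3):
    """Report which claimed anchors are on the checklist and whether the target is met."""
    claimed = {anchor.strip().lower() for anchor in task_anchors}
    recognised = sorted(claimed & set(HUMAN_ANCHORS))
    unknown = sorted(claimed - set(HUMAN_ANCHORS))
    return {
        "recognised": recognised,
        "unknown": unknown,
        "meets_target": len(recognised) >= required,
    }


# Example: a redesigned task that claims three anchors.
result = check_anchors(["Iteration", "Oral defence", "Physical evidence"])
print(result)
```

A count like this only tells you the anchors are present on paper. Whether they actually carry the task is still a judgment call, which is what the re-test in Step 4 is for.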

Step 4: Re-test the prompt with AI

If AI can still "complete" it perfectly, add one more anchor.

Usually, iteration + oral defence changes everything.

Classroom examples (that don't require heroic effort)

Remember/Understand → Make it observable

Instead of: "Define photosynthesis."

Try: Observe a plant in your home (or school) for one week.

Take 3 photos across the week.

Annotate the photos to explain what you think is happening and why.

Then write a short explanation connecting your observations to photosynthesis.

This works because AI can explain photosynthesis, but it can't supply the student's own plant and evidence without you noticing.

Apply → Make it local and constraint-based

Instead of: "Calculate area."

Try: Redesign your classroom layout for collaboration.

Measure the actual space.

Decide table sizes.

Calculate spacing.

Justify the layout based on movement, access needs, and learning purpose.

Again, the "AI-resistant" move is not harder math.

It's grounding the math in a real constraint AI doesn't have access to.

Analyze → Make perspective and process visible

Instead of: "Analyze the causes of WWI."

Try: Analyze the causes of WWI from three stakeholder viewpoints.

Then add the killer line:

"Explain how your own background influenced which perspective you found most compelling."

That metacognitive layer is what most AI-generated answers cannot authentically defend under questioning.

Evaluate → Force trade-offs, not "best answer"

Instead of: "Which energy source is best?"

Try: You have budget for one project.

Option A helps fewer people now.

Option B helps more people later.

Defend a recommendation knowing half the community will disagree.

This is where values collide.

And that's exactly where humans need practice.

Create → Require iteration + real feedback

Instead of: "Write a persuasive essay."

Try: Design a campaign to change one behaviour in your school.

Survey at least 10 students.

Create 3 artefacts (poster, script, social media plan).

Then write a reflection showing what changed after peer feedback.

AI can draft a campaign.

But it cannot run your survey.

It cannot experience your peer feedback.

And it cannot "own" the iteration unless you design for it.

"This sounds like more work"

You might be thinking:

"I already have too much to plan and grade."

Fair.

And here's my pushback:

The workload doesn't come from better tasks.

It comes from vague tasks.

When the assignment is generic, you end up grading style.

You end up policing authenticity.

You end up in endless conversations.

When the task is anchored in evidence, process, and local context, the marking becomes cleaner.

You're assessing thinking moves.

Not guessing who used AI.

Also, you don't need to redesign everything.

Start with one assessment per unit.

That's enough to shift culture.

Leader workflow: how to scale this across a department (without forcing compliance)

If you're a coach, HOD, or curriculum leader, your job is not to write everyone's assessments.

Your job is to create the conditions where good assessment design becomes normal.

Here's the team system I recommend.

1) Create a shared "AI use policy" that is specific, not moralistic

"You may use AI for…"

"You may NOT use AI for…"

"Required: attach an appendix showing prompts, tools, and verification."

This is powerful because it teaches AI literacy.

Not AI avoidance.

It also reduces the grey area that creates conflict.

2) Standardise a one-page assignment template with the checklist built in

Use the checklist as a design rubric:

- Personal context

- Multiple perspectives

- Metacognition

- Iteration

- Oral defence

- Process evidence

If your school uses common planning templates, embed this into the workflow.

Don't make it "another initiative."

Make it "how we plan here."

3) Build moderation around "evidence of thinking," not "signs of cheating"

When teams moderate student work, ask:

- Where is the thinking visible?

- Where is the student's decision-making?

- Where did the work change after feedback?

If your moderation meetings focus on these questions, the culture shifts fast.

4) Train students to use AI like a tool, not a ghostwriter

In practice, that means modelling prompts like:

- "Give me three counterarguments to my claim."

- "Identify assumptions in my reasoning."

- "Suggest questions I should ask in my interview."

Then requiring students to show:

- What they asked

- What they accepted

- What they rejected

- Why
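
If students submit their AI appendix digitally, one possible way to structure each entry is sketched below. The four fields mirror the list above; the class name `AIUseEntry`, the field names, and the `missing_fields` check are hypothetical choices of mine, and a shared document or paper form would serve just as well.

```python
from dataclasses import dataclass, asdict


@dataclass
class AIUseEntry:
    """One row of a student's AI-use appendix (hypothetical structure)."""
    asked: str     # what the student asked the AI
    accepted: str  # what they kept from the response
    rejected: str  # what they discarded
    why: str       # their reasoning for both choices

    def missing_fields(self):
        """Return the names of fields the student left blank."""
        return [name for name, value in asdict(self).items() if not value.strip()]


# Example: the student logged a prompt but skipped the reasoning.
entry = AIUseEntry(
    asked="Give me three counterarguments to my claim.",
    accepted="The second counterargument, about cost",
    rejected="The first one, which misread my claim",
    why="",
)
print(entry.missing_fields())  # ['why']
```

The blank "why" is the useful signal here: it points you to the conversation worth having with that student.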

Research support: questioning and reflection matter (especially with AI)

A 2024 study in Education 3–13 examined a Year 5 class in an IB PYP school in Hong Kong (25 students) using ChatGPT within a Bloom's-taxonomy-guided model focused on questioning and reflection.

The researchers found that Creating and Evaluating were dominant in students' questioning/answering processes, while Applying was notably low, suggesting students struggled to transfer what they did with AI into other learning areas.

That finding matters for international schools.

Because it tells us:

If we don't explicitly design for transfer, reflection, and application, students will use AI as a one-off answer machine.

Not as a thinking partner.

What "AI-proof" actually means (and what it doesn't)

AI-proof does not mean "AI can't help."

AI can help with brainstorming, summarising, drafting, translating, and generating options.

AI-proof means the core cognitive work cannot be completed without the learner's presence—without their choices, their evidence, their defence, and their iteration.

That's the bar.

And Bloom's gives us a clean language for designing toward it.

Getting started

Pick one assignment you'll use in the next 2–4 weeks.

Run the AI test.

Then rewrite it by adding three anchors:

- One personal/local anchor

- One process anchor (reflection, log, or oral defence)

- One iteration anchor (draft → feedback → revision)

Then re-test it with AI.

If AI still "wins," add one more constraint.

Do this once.

And you'll feel the shift immediately.