You Don't Have a Tool Problem. You Have a Selection Problem.

A new AI tool drops every Tuesday.

Your colleague swears by one. A Twitter thread hypes another. An admin sends a newsletter about a third.

By Friday, you've bookmarked nine tools, tried zero, and the stack of ungraded papers hasn't changed.

This isn't laziness. This is decision paralysis — and it's the most common failure mode I see in schools trying to adopt AI.

Here's the uncomfortable truth: most AI tool lists make this worse. They give you 50 options without a single reason to choose one over another.

This article does the opposite.

We'll walk through nine categories of AI tools. The categories are informed by a 2026 educator typology (Bower, Torrington & Lai surveyed 211 educators across nine countries, giving us a useful starting taxonomy), cross-referenced with multiple meta-analyses covering hundreds of studies, and tested across 2,000+ hours of hands-on educator training.

The Bower study is a reasonable starting point — but a sample of 211 is just that: a starting point. What follows goes far beyond it.

For each category, I'll tell you:

- What it actually does well (current as of February 2026)

- Where it fails or misleads

- What specific tool to try first — and what it costs

- What the research says about learning impact

No hype. No affiliate links. Just honest assessments from someone who trains educators and sees what sticks.

Why This Map Matters Now

Before we get into categories, let's ground this in data — from multiple sources, not just one.

92% of university students now use AI in some form — up from 66% one year earlier. 88% use it specifically for assessments (HEPI/Kortext, 2025, N=1,041).

Meanwhile, 53% of teachers use AI for school — a 15+ percentage point jump in under two years. But over 80% of students say their teachers never taught them how to use it (RAND, 2025, N=8,601 teachers + 1,333 students).

The learning outcomes data is compelling. A meta-analysis of 34 experimental studies found GenAI has a statistically significant positive impact on learning, with effect sizes of 0.795 in the cognitive dimension and 0.711 for competency (Ma et al., 2025). A separate review of 68 studies found a moderate positive effect (SMD = 0.45) across 337 effect sizes. A Harvard RCT found students using a well-designed AI tutor learned more than twice as much in less time compared to high-quality active learning (Kestin et al., 2025, N=194).
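If "effect size" and "SMD" feel abstract, a small worked example may help. The sketch below computes a standardized mean difference (Cohen's d with a pooled standard deviation) for two groups of test scores. The scores and the function name are invented for illustration and are not taken from any of the studies above; meta-analyses also often use a bias-corrected variant such as Hedges' g, so treat this as the plain textbook version only.

```python
from math import sqrt
from statistics import mean, stdev


def standardized_mean_difference(treatment, control):
    """Cohen's d: difference in group means divided by the pooled SD."""
    n_t, n_c = len(treatment), len(control)
    # Sample variances (statistics.stdev uses n - 1 in the denominator)
    var_t = stdev(treatment) ** 2
    var_c = stdev(control) ** 2
    pooled_sd = sqrt(((n_t - 1) * var_t + (n_c - 1) * var_c) / (n_t + n_c - 2))
    return (mean(treatment) - mean(control)) / pooled_sd


# Hypothetical test scores, invented purely for illustration
ai_group = [72, 75, 78, 80, 85]
control_group = [70, 73, 76, 78, 83]

d = standardized_mean_difference(ai_group, control_group)
print(round(d, 2))  # 0.4
```

In this made-up example the AI group scores two points higher on average, and the scores in each group spread by about five points, so the effect size is roughly 0.4: the groups differ by about four tenths of a standard deviation.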

But here's the finding that should keep every educator awake:

The Brookings Global Task Force on AI in Education — after consulting education leaders, technologists, teachers, families, and students across 50+ countries — concluded in January 2026: "Under current conditions, the risks of AI use in education outweigh the benefits."

Not because AI doesn't work. Because most implementations lack pedagogical guardrails.

The OECD Digital Education Outlook 2026 puts it precisely: GenAI supports learning when guided by clear teaching principles. Without pedagogical guidance, outsourcing tasks to GenAI enhances performance with no real learning gains.

Performance is not the same thing as learning. AI makes outputs look better. That doesn't mean understanding happened.

Which is why you need a map before you start walking — and why every recommendation in this guide comes with the pedagogical context, not just the tool name.

Category 1: General-Purpose LLMs

What they are: ChatGPT, Claude, Gemini, DeepSeek, Perplexity, Microsoft Copilot

What's changed: The landscape has shifted dramatically in early 2026. The latest ChatGPT models support 400K+ token context windows. Claude handles 1 million tokens — you can upload an entire textbook and ask questions about it. Gemini is now natively integrated into Google Slides, Docs, and Classroom, which matters enormously for the 170+ million students and educators already on Google Workspace. DeepSeek, trained at a fraction of the cost of competing models, delivers competitive quality at dramatically lower output cost — relevant for budget-constrained schools. And Microsoft Copilot now includes a dedicated Teach Module with lesson planning, differentiation, and standards alignment from 35+ countries — free for many education tenants.

What they're good for: Drafting, summarising, brainstorming, differentiation, feedback generation, explaining concepts at different reading levels.

Where they fail: Factual accuracy. Every general LLM will occasionally fabricate citations, invent statistics, or present false information with absolute confidence. NIST calls this "confabulation" and lists it as one of twelve documented GenAI risk categories.

What the research says: A study of 150 participants found that unguided AI use fosters cognitive offloading without improving reasoning quality — but structured prompting significantly reduces offloading and enhances both critical reasoning and reflective engagement (SBS Swiss Business School, 2025). The gap between mediocre AI output and transformative AI output is almost entirely about prompt quality — which is a pedagogical skill, not a technical one.

Try this week: Open ChatGPT, Claude, or Gemini. Paste a paragraph from your next lesson's reading material. Ask: "Rewrite this at three reading levels: Year 5, Year 8, and Year 11. Keep the core concepts identical." Time yourself. It takes under 90 seconds. That's differentiation that used to take 30 minutes.
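If you find yourself reusing this prompt, a tiny template keeps it consistent. The sketch below only builds the prompt text as a string; it does not call any AI service, and you would still paste the result into whichever chat tool you use. The function name and the slightly reworded instruction are my own illustration, not part of any tool.

```python
def reading_level_prompt(passage, levels=("Year 5", "Year 8", "Year 11")):
    """Build a differentiation prompt for the given passage and reading levels."""
    level_list = ", ".join(levels)
    return (
        f"Rewrite this passage once for each of these reading levels: {level_list}. "
        "Keep the core concepts identical.\n\n"
        f"Passage:\n{passage.strip()}"
    )


# Hypothetical passage, used only to show the output shape
prompt = reading_level_prompt("  Plants make food from sunlight.  ")
print(prompt)
```

Keeping the instruction fixed and changing only the passage makes it easier to compare results across lessons and to spot when the output drifts from the source.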

Verdict: Essential. But only with a verification habit and structured prompting. Never publish LLM output to students without a human check.

Category 2: Image Creation Tools

What they are: Google Nano Banana Pro, ChatGPT (GPT Image), Canva AI, Adobe Firefly, Ideogram, Midjourney, Stable Diffusion, FLUX

The biggest story in this category: Google's Nano Banana Pro — built on Gemini 3 Pro — has fundamentally changed what's possible. It generates images up to 4K resolution with near-perfect text rendering (legible labels, multi-language text in images), and it's integrated directly into Google Slides, Vids, NotebookLM, and AI Studio. For educators in the Google ecosystem, this means image generation is no longer a separate tool — it's built into the workflow. Free tier: 2 images/day. Google AI Plus ($19.99/mo): ~100 images/day.

Meanwhile, OpenAI's GPT Image replaced DALL-E entirely. It's native to ChatGPT, handles complex multi-object scenes, and works through conversational editing. Available via ChatGPT Plus ($20/mo).

What they're good for: Custom visual aids, culturally representative illustrations, historical scenes no stock library contains, student creative projects.

Where they fail: Scientific accuracy. Ask for "a diagram of the water cycle" and you'll get something that looks scientific but may label things incorrectly. AI image generators are artists, not scientists.

The deeper opportunity: Image creation teaches visual literacy. When students craft prompts to produce meaningful images, they practise precision in language, iterative thinking, and aesthetic judgment simultaneously. Three skills for the price of one tool.

What's free for educators:

| Tool | Free Tier / Cost | Best For |
|---|---|---|
| Canva AI | Completely free for K-12 (Canva for Education) | Most accessible, already in classroom workflows |
| Ideogram | Free tier available | Best text-in-image (worksheets, infographics, signs) |
| Google Nano Banana | 2 images/day (watermarked) | Google Workspace integration |
| GPT Image | Via ChatGPT Plus ($20/mo) | Conversational editing, complex scenes |
| Adobe Firefly | Limited free plan | Commercially safe, Creative Cloud integration |
| Midjourney | No free tier | Highest aesthetic quality (art/design courses only) |

Try this week: Use Canva's AI image generator (free for teachers) to create a visual metaphor for a concept you're teaching next week. Then have students critique it: What does this image get right? What's misleading? How would you improve the prompt?

Verdict: Massively upgraded. Text rendering is solved, image generation is native to LLMs, and Canva for Education makes the best tool free. Most educators still use image AI for decoration. The real power lies in using it as a thinking tool.

Category 3: Audio and Music Generation

What they are: Suno, ElevenLabs, Udio, NotebookLM Audio Overviews, Edodo Piano, Edodo Drum Beats, Edodo Pitch Checker

What's new: Suno now generates songs up to 8 minutes long with intelligent composition awareness (it understands verse-chorus dynamics) and a built-in Studio DAW. The free tier gives ~50 credits/day (~10 songs). ElevenLabs' latest text-to-speech (TTS) model works in 70+ languages with emotional range. But the most surprising tool in this category might be NotebookLM's Audio Overviews: upload any source document and two AI "hosts" create a podcast-style discussion in 80+ languages. The Interactive Mode lets students "raise their hand" to interrupt the AI hosts and ask questions.

Where most educators miss the point: This is the most underutilised category in formal education — and one of the most powerful for engagement and accessibility.

Research on embodied cognition confirms that learning tied to rhythm and melody activates deeper encoding pathways. A history teacher who has students compose a song about the French Revolution using Suno is doing something qualitatively different — and potentially more memorable — than assigning a research essay.

For hands-on music exploration without any login or AI overhead, classroom-ready tools like Edodo's Piano, Drum Beats, and Pitch Checker let students experiment with melody, rhythm, and tuning directly in the browser — free, instant, no accounts required.

Try this week: Take a key vocabulary list from your subject. Paste it into Suno with the instruction: "Create a short educational song that teaches these 8 terms and their definitions. Style: upbeat pop, suitable for 12-year-olds." Play it at the start of next week's lesson.

Verdict: High engagement, low adoption. Most teachers don't know these tools exist. That's an opportunity.

Category 4: Video Generation

What they are: Sora, Google Veo, HeyGen, Runway, Kling AI

What's changed: OpenAI's Sora requires ChatGPT Plus ($20/mo) or Pro ($200/mo) — the free tier was removed. Google's Veo is now generally available via API and Google AI Studio, generating short clips with native audio and upscaling to 4K. Reviewers call it the "best all-rounder" for AI video. HeyGen supports 175+ languages with natural lip sync — imagine translating a teacher's video into every student's home language.

The pedagogical shift: Students become producers, not consumers. A geography student generates a visualised explanation of tectonic plates. A language student creates a short film in their target language. The shift from watching to creating is one of the most research-supported moves an educator can make.

Where they fail: Quality is inconsistent, though improving fast. Sora and Veo produce impressive short clips but hallucinate visual details. Production time is still significant for polished output.

Try this week: Use Veo via Google AI Studio (free tier available) or Clipchamp (free with Microsoft) to have students create a 60-second explainer video on a topic they're studying. Constraint: they must write the script themselves before the AI generates visuals. The constraint is the learning.

Verdict: Maturing rapidly. Veo and HeyGen are the standouts. Best used as a student creation tool with clear constraints, not as a replacement for teacher-made content.

Category 5: Presentation Tools

What they are: Gamma, Curipod, Beautiful.AI, Google Slides + Gemini, Canva, Designer in PowerPoint

What's new: Gamma introduced Gamma Agent — an AI design partner that researches the web, refines content, and restyles decks through natural language conversation. It generates presentations, documents, websites, and social posts from one platform. Google Slides now has Gemini built in (launched January 2026) — generate images, create slides, and write content directly inside Slides. Zero switching cost for schools already on Google Workspace.

The honest assessment: This remains the biggest time-saver in the entire typology. A teacher who spends 3 hours weekly building slide decks can redirect that time to student feedback, relationship-building, or professional learning.

Curipod deserves special mention — it's purpose-built for classrooms with interactive polls, quizzes, word clouds, and drawings built into every lesson. Its AI Reports show what the class understood, what needs work, and which students need extra help. That formative assessment integration is what separates it from generic presentation tools.

For real-time classroom interaction, Edodo's Word Cloud and Quiz Game are purpose-built for live collaborative engagement — students join instantly via code, no accounts required, with AI-first authoring for teachers.

The trap: AI-generated presentations are generic by default. They look professional but lack the specific classroom context, local examples, and targeted questions that make a lesson land. Always customise.

Try this week: Take the learning objectives for one lesson this week. Paste them into Gamma with: "Create a 10-slide presentation for Year 9 students. Include one discussion question per slide and one real-world example." Then spend 15 minutes customising it. Compare the total time to your usual process.

Verdict: High impact, low risk. Start here if you've never used AI for teaching. Google Slides + Gemini is the zero-friction entry point for Google schools; Gamma is the power tool.

Category 6: Research and Study Tools

What they are: NotebookLM, Perplexity, Elicit, Research Rabbit, Humata

The standout: NotebookLM has become the most versatile tool in this category. Upload sources (PDFs, articles, YouTube videos, websites) and get AI-powered analysis, study guides, and Audio Overviews — podcast-style discussions in 80+ languages with an Interactive Mode where students can interrupt to ask questions. It now also generates Video Overviews — narrated slide decks from your sources. Free tier available.

Perplexity is the strongest option for teaching research literacy — every response includes inline citations linked to original sources. Students can see exactly where each claim comes from and verify it. That built-in source transparency is pedagogically powerful.

The critical distinction: Using AI to accelerate comprehension versus using it to bypass comprehension. A student who uploads a paper to NotebookLM and asks "What are the three main arguments?" is accelerating. A student who asks "Write me a summary I can submit" is bypassing. Same tool, opposite outcomes. The difference is the task design you set.

Try this week: Upload a research article relevant to your subject into NotebookLM. Generate the Audio Overview. Listen to it. Then ask yourself: Would I assign this to students? What questions would I pair with it to ensure they still think critically?

Verdict: Transformative when paired with critical thinking tasks. Dangerous when used as a shortcut. NotebookLM and Perplexity are the two most useful tools here.

Category 7: AI Tutoring Tools

What they are: Khanmigo, SchoolAI, Flint K12, Cogniti, MathGPT, Class Companion

The highest-evidence category. This is where the research is strongest and most consistent.

A Harvard RCT found AI tutoring produced more than double the learning gains compared to high-quality in-class active learning (Kestin et al., 2025, N=194). A Brookings review of four RCTs found learning gains across all studies, with AI tutoring equivalent to 1.5 to 2 years of schooling gains in some contexts. A systematic review of intelligent tutoring systems (ITS) in K-12 (npj Science of Learning, 2025) found generally positive effects, with robust results in quantitative domains.

Key updates:

- Khanmigo is now free for all U.S. K-12 teachers through a Microsoft partnership. It has a custom built-in calculator and significantly improved error detection. It refuses to give answers — it guides students to reason their way forward.

- SchoolAI is always free for teachers and is used in 1 million classrooms across 80+ countries. Its "Spaces" feature creates programmable AI chat rooms where teachers define the learning objectives and the AI stays within those boundaries. Featured by OpenAI as a model education platform.

- Flint K12 raised $15M to build personalised learning with AI whiteboards, enhanced analytics, and drag-and-drop activity builders.

The critical nuance: Harvard's Graduate School of Education puts it bluntly: AI can add to learning — or subtract from it, depending on how it's used. The Brookings review calls the optimal model "human-AI hybrid vigor" — teachers monitoring and guiding AI tutor use, not AI replacing teachers.

Try this week: Sign up for Khanmigo (free for U.S. teachers) or SchoolAI (free globally). Assign one problem set. Observe: do students engage with the Socratic questioning, or do they try to game the system for answers?

Verdict: The highest-evidence category. But the tool's design matters less than the teacher's task design.

Category 8: Custom Education Tools

What they are: MagicSchool AI, Diffit, Brisk Teaching, EduAide, SchoolAI, Edodo Forms, Edodo Kanban Board, Edodo Quiz Game, Edodo Word Cloud

Why these are different from general LLMs: They're opinionated. They're built around educational best practices and curriculum frameworks. That means less prompting, less verification, and less cognitive load on the teacher.

Key updates:

- MagicSchool AI now serves 5 million+ educators with 80+ teacher-specific workflows. Its new "Knowledge" feature lets districts upload curriculum documents once — that guidance then applies across all tools automatically.

- Diffit generates differentiated materials from any text, video, or website. It auto-generates vocabulary lists, multiple-choice, short-answer, and open-ended questions — all exportable to Google Docs, Slides, Forms, and Classroom. Free version available.

- Brisk Teaching works directly inside Google Docs, Forms, PDFs, Slides, and YouTube, with no platform switching. More than 1 million teachers have adopted it. Its new Curriculum Intelligence feature automatically aligns with a school's standards, pacing, and instructional materials. More than 20 features are free.

Research consistently shows that teachers who adopt education-specific tools report higher satisfaction and better outcomes than those using general-purpose LLMs — primarily because the cognitive load of adapting the tool is dramatically reduced.

For classroom interaction and formative assessment, purpose-built tools like Edodo's Forms (create and share surveys), Kanban Board (collaborative project boards with 10 classroom templates), Quiz Game (real-time interactive quizzes), and Word Cloud (live collaborative brainstorming) offer AI-first authoring for teachers with instant, no-login access for students. These are tools designed around the same principles discussed in this article: teachers author with AI assistance, students interact without friction.

Try this week: Go to Diffit (free). Paste any article or text passage. Select the reading level of your lowest-level reader. Generate the adapted version. Compare it to the original. Ask yourself: How long would this have taken me manually?

Verdict: The most practical category for overwhelmed teachers. Brisk and Diffit for differentiation. MagicSchool for breadth. Edodo tools for live classroom interaction. Start here alongside presentation tools.

Category 9: Emerging and Unconventional Tools

What they are: AI Agents, GitHub Copilot, AI Grading tools, Edodo Learning Experiences, Edodo Learning Paths, Edodo Courses

The shift from tools to agents: 2026 marks the year AI moves from "tool you prompt" to "agent that acts." Autonomous tutoring systems engage in Socratic dialogue, scaffold concepts, and generate infinite practice problems based on real-time performance — monitoring student progress over entire courses and adapting without human prompting. The OECD calls for moving from off-the-shelf chatbots toward purpose-built educational AI designed with teachers.

GitHub Copilot is free for verified students and teachers (included in the Student Developer Pack). Research shows students using Copilot completed tasks 35% faster and made 50% more progress, but they also reported not understanding how its suggestions work. The pedagogical challenge: using AI coding assistants to accelerate learning, not bypass it. Sound familiar?

AI Grading and Feedback tools — CoGrader, Gradescope (owned by Turnitin), EssayGrader — are approaching viability. A meta-analysis of 41 studies involving 4,813 students found no statistically significant differences between AI-generated and human-provided feedback on learning outcomes. Over 60% of educators are expected to adopt AI grading by 2026. All current tools are designed to assist, not replace, teacher judgment.

For structured learning at scale, platforms like Edodo's Learning Experiences (interactive coding playgrounds with AI auto-fix), Learning Paths (curated sequences of experiences), and Courses (full LMS with quizzes, certificates, and activity tracking) represent the direction: AI-assisted authoring for educators, structured engagement for learners.

Verdict: Watch this space closely. Agents, coding assistants, and AI grading are the three emerging categories that will reshape education over the next 12 months. But don't build your curriculum around them yet — they're changing too fast.

The Risk Nobody Talks About Loudly Enough

The research on AI in education is positive — when implementation is guided.

But there is one consistent red flag across every major study: cognitive offloading.

An MIT Media Lab study used EEG to measure brain activity during essay writing. Participants who relied heavily on ChatGPT showed measurably weaker neural connectivity than both search-engine users and no-tool users. When those same participants later wrote without AI, they showed reduced cognitive engagement. The researchers coined the term "cognitive debt": AI spares mental effort in the short term but generates long-term costs, including diminished critical thinking, reduced creativity, and shallow processing (N=54; note: not yet peer-reviewed).

This finding is now corroborated by multiple sources:

- A Frontiers in Psychology study (2025) describes AI's "cognitive paradox" — it can be both a cognitive amplifier and inhibitor. Over-reliance reduces the germane cognitive load needed for deep learning.

- A study on preservice teachers (Nature Scientific Reports) found GenAI boosted learning for those who used it for deep conversations and explanations, but hampered learning for those who sought direct answers.

- The Brookings Global Task Force warns of a "doom loop of AI dependence" — students offload thinking, leading to cognitive decline, leading to more offloading.

Cognitive offloading — using AI as a substitute for thinking rather than a scaffold for deeper thinking — is the defining pedagogical risk of our era.

The most dangerous misuse of AI isn't academic dishonesty.

It's the quiet erosion of a student's capacity to grapple with complexity, tolerate ambiguity, and generate original thought — because AI always did it for them.

This is why the question is never "which AI tool?"

It's always "which AI tool, for which purpose, with which pedagogical guardrails?"

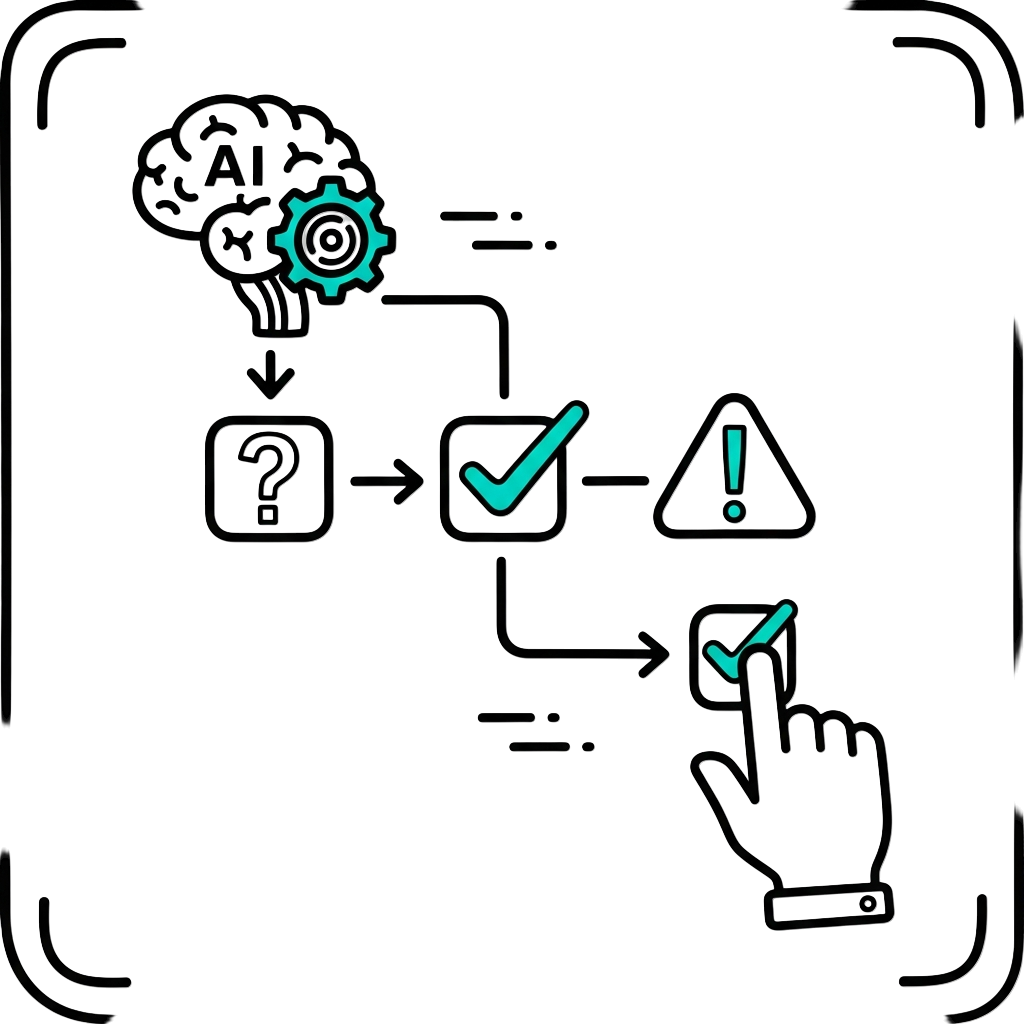

The 5-Question Decision Framework

Before adopting any tool from any category, run it through these five questions:

1. What's the learning outcome?

Never start with the tool. Start with what you want students to know, do, or understand. The tool is a vehicle, not the destination.

2. Does this tool build thinking or bypass it?

Every tool sits on a spectrum between cognitive scaffold (supports thinking) and cognitive crutch (replaces thinking). Where your tool sits should be a conscious, deliberate choice. The SBS structured prompting study found that this single design choice — structured vs unstructured AI use — determines whether students offload or engage.

3. Is it aligned with how your students actually learn?

A tool that's brilliant for discovery learning may be terrible for procedural fluency. A tool designed for individual work may undermine collaborative learning. Match the tool to the pedagogy, not the other way around.

4. Who does this tool work for — and who does it exclude?

Does it require paid access? Does it work on the devices your students have? Is student data protected? These questions aren't afterthoughts. They're prerequisites. Note: HEPI data shows a clear digital divide, with male, STEM, and socioeconomically advantaged students using AI more.

5. Have you tried it yourself first?

Research from the Annenberg Institute found that educators who documented their AI experiments and reflected with colleagues made significantly faster progress toward genuine AI fluency. Abstract training produces almost no transfer. Applied exploration does.
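For teams that want to keep a written record, the five questions above can be sketched as a simple checklist. This is a minimal illustration, not a formal instrument: the class name, field names, and the rule that every question needs a written answer before piloting are my own assumptions.

```python
from dataclasses import dataclass

# The five questions from the framework above
QUESTIONS = (
    "What's the learning outcome?",
    "Does this tool build thinking or bypass it?",
    "Is it aligned with how your students actually learn?",
    "Who does this tool work for, and who does it exclude?",
    "Have you tried it yourself first?",
)


@dataclass
class ToolReview:
    """Hypothetical record of one teacher's answers for one tool."""
    tool: str
    answers: dict  # maps a question to a written answer

    def unanswered(self):
        # A question counts as answered only if it has non-blank text
        return [q for q in QUESTIONS if not self.answers.get(q, "").strip()]

    def ready_to_pilot(self):
        # Assumption: pilot only once all five questions have an answer
        return not self.unanswered()


# Example with invented answers: only the first two questions are filled in
review = ToolReview(
    tool="Diffit",
    answers={
        QUESTIONS[0]: "Students can summarise the article's main argument.",
        QUESTIONS[1]: "Scaffold: adapted text, students still write the summary.",
    },
)
print(review.ready_to_pilot())  # False
print(review.unanswered())      # the three remaining questions
```

The point of writing answers down rather than ticking boxes is that the written answers are what you bring to a colleague afterwards.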

Your Starting Point: One Tool, One Week

Don't try to master nine categories.

Choose one based on your most pressing classroom challenge:

| Your Challenge | Category | Tool to Try First |
|---|---|---|
| Drowning in lesson prep | Custom Education | MagicSchool AI (free tier) |
| Students need better research skills | Research & Study | NotebookLM (free) |
| Need engaging visuals without design skills | Image Creation | Canva AI (free for K-12 teachers) |
| Diverse reading levels in one class | Custom Education | Diffit (free tier) |
| Students need support outside class hours | AI Tutoring | Khanmigo (free for U.S. teachers) or SchoolAI (free globally) |
| Want AI-generated presentations fast | Presentation | Gamma (free tier) or Google Slides + Gemini |
| Need real-time classroom engagement | Custom Education | Edodo Quiz Game or Word Cloud (free, no login) |
| Want to differentiate any content instantly | General LLM | Claude, ChatGPT, or Gemini |

Give yourself one week with one tool.

Document what works and what doesn't.

Then bring that experience to a colleague.

That's how AI fluency actually builds — not through reading about tools, but through using them with intention and reflecting with rigour.

The Human Is Not Optional

Here's the most important sentence in this entire article:

No tool in any of these nine categories replaces what you bring to your classroom.

Stanford HAI research confirms it: "AI amplifies whatever educational foundation already exists."

In schools where teachers are connected, purposeful, and pedagogically confident — AI becomes fuel.

In schools where teaching is fragmented and reactive — AI accelerates that fragmentation.

86% of education organisations now use generative AI — the highest adoption rate of any industry. The global AI education market is projected to grow from $7.57 billion to over $112 billion by 2034. The tools are extraordinary and accelerating.

But you are still the point.

References:

- Bower, M., Torrington, J., & Lai, J.W.M. (2026). Typology of Generative AI Tools for Education. OSF Preprint

- Ma, Y., et al. (2025). A Meta-Analysis of the Impact of Generative AI on Learning Outcomes. Journal of Computer Assisted Learning.

- Kestin, G., Miller, K., et al. (2025). AI Tutoring Outperforms In-Class Active Learning. Scientific Reports (Nature).

- RAND Corporation (2025). AI Use in Schools Is Quickly Increasing but Guidance Lags Behind.

- HEPI/Kortext (2025). Student Generative AI Survey 2025.

- Brookings Global Task Force (2026). A New Direction for Students in an AI World.

- Brookings (2026). What the Research Shows About Generative AI in Tutoring.

- OECD (2026). Digital Education Outlook 2026.

- MIT Media Lab (2025). Your Brain on ChatGPT.

- Jose B., et al. (2025). The Cognitive Paradox of AI in Education. Frontiers in Psychology.

- SBS Swiss Business School (2025). From Offloading to Engagement: Structured Prompting Study. MDPI Data.

- Nature (2025). Systematic Review of AI-Driven ITS in K-12. npj Science of Learning.

- AI vs Human Feedback Meta-Analysis (2025). British Journal of Educational Psychology.

- NIST (2024). AI 600-1: Generative AI Risk Profile.

- UNESCO (2024). AI Competency Framework for Teachers.

- Stanford HAI. AI Challenges Core Assumptions in Education.

- Harvard GSE. AI Can Add, Not Just Subtract, From Learning.

- Annenberg Institute at Brown. AI in Professional Learning.

- Microsoft Education (2026). AI-Powered Teaching and Learning Innovations.