What Comes Next? — The Confirmation Bias Trap

At a Glance

- Time: 4-5 minutes

- Prep: None (whiteboard or any writing surface)

- Group: Whole class (collaborative guessing)

- Setting: In-person, hybrid, or online

- Subjects: Universal (especially effective for science, math, research methods, AI education)

- Energy: High (the room gets intensely competitive)

Purpose

Demonstrate confirmation bias in real time: the common human tendency to run only the tests that could CONFIRM our theory and to skip the tests that could DISPROVE it. Based on Peter Wason's famous 2-4-6 Task (1960), this activity reliably catches audiences of every kind, from students to scientists to CEOs. It connects directly to AI literacy: we tend to ask AI questions that validate our existing beliefs, rather than testing whether AI's confident answers are actually wrong.

How It Works

Step-by-step instructions:

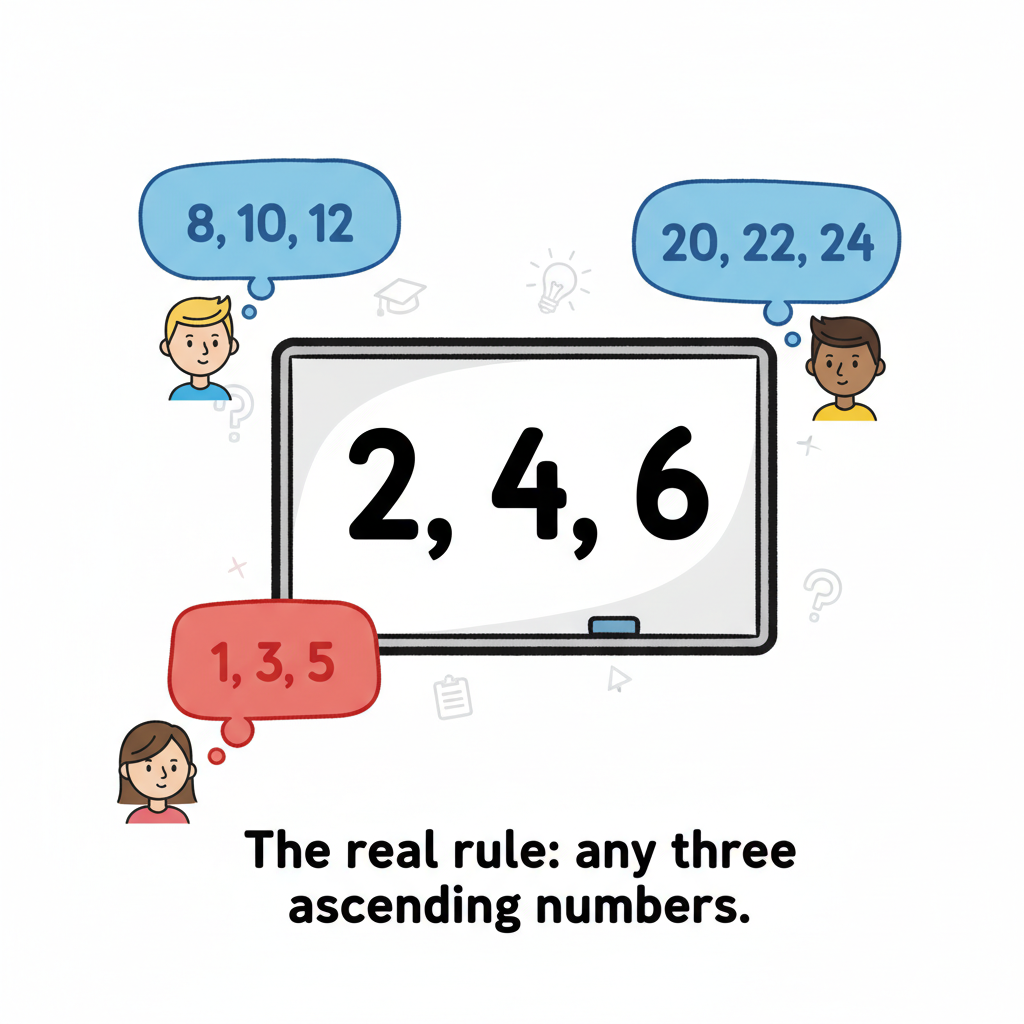

- SET UP (15 seconds). Write on the board: 2, 4, 6. Say: "I have a rule in my mind. These three numbers follow my rule. Your job is to figure out my rule by suggesting your own sets of three numbers. For each set you suggest, I'll tell you whether it follows my rule, yes or no."

- ROUND 1: CONFIRMING TESTS (60 seconds). Students will test numbers that match their hypothesis:

  - "8, 10, 12?" → "Yes, follows my rule."

  - "20, 22, 24?" → "Yes."

  - "100, 102, 104?" → "Yes."

  - At this point, someone will confidently announce that the rule is "add 2" or "even numbers increasing by 2." You say: "No. That's not my rule."

- ROUND 2: CONFUSION (60 seconds). They'll adjust slightly but keep confirming:

  - "3, 5, 7?" → "Yes." (This surprises them: odd numbers work!)

  - "1, 3, 5?" → "Yes."

  - They guess: "Numbers increasing by 2?" → "No."

  - "Consecutive odd numbers?" → "No."

- ROUND 3: THE BREAKTHROUGH (30 seconds). Eventually someone tries a genuinely different test:

  - "1, 5, 100?" → "Yes!" The room erupts in confusion.

  - "1, 2, 3?" → "Yes."

  - "10, 50, 999?" → "Yes."

- THE REVEAL (30 seconds). Announce: "My rule was simply: any three numbers in ascending order." That's it. 1, 2, 3 works. 5, 99, 1000 works. Even 0.001, 0.002, 0.003 works. The rule was far simpler and broader than anyone imagined.

- THE LESSON (30 seconds). "For most of this game, every test you ran was chosen to CONFIRM your theory. Nobody tried 6, 4, 2 to see if it would FAIL. Nobody tried 1, 1, 1 to see if it would fail. You asked the questions you expected to get 'yes' to. This is confirmation bias, and it's one of the biggest risks when we use AI."
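
For facilitators who want to check answers quickly, or run the game from a script in an online session, the rule from the reveal above can be sketched in a few lines of Python. This is a minimal sketch, assuming "ascending" means strictly ascending (so 1, 1, 1 gets a "No", as the lesson step implies). The function names `follows_rule` and `answer` are illustrative and not part of the original activity.

```python
def follows_rule(a, b, c):
    """Return True when the three numbers are in strictly ascending order.

    Assumption: equal neighbours such as 1, 1, 1 do NOT follow the rule.
    """
    return a < b < c


def answer(a, b, c):
    """Give the facilitator's neutral reply for a suggested triple."""
    return "Yes" if follows_rule(a, b, c) else "No"


# A few of the triples mentioned in the activity.
for triple in [(2, 4, 6), (8, 10, 12), (1, 5, 100), (6, 4, 2), (1, 1, 1)]:
    print(triple, "->", answer(*triple))
```

The last two triples are the ones the lesson step says nobody tries: they are the only ones in this list that return "No".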

What to Say

Opening: "I have a secret rule. These three numbers — 2, 4, 6 — follow my rule. Your job is to figure out what my rule is. You can test by giving me any three numbers, and I'll tell you yes or no. Who wants to go first?"

During confirming tests: Just say "Yes, that follows my rule" or "No, that doesn't follow my rule." Don't give hints. Don't smile knowingly. Be neutral.

After the wrong guess: "Interesting theory! But no, that's not my rule. Keep testing."

After the reveal: "Look at what happened. You started with a theory — 'add 2' — and then ONLY tested numbers that would confirm it. 8, 10, 12. 20, 22, 24. 100, 102, 104. Every test was designed to get a 'yes.' Nobody tested 1, 5, 100 or 3, 7, 2 early on. You never tried to BREAK your theory. You only tried to CONFIRM it."

AI connection: "This is exactly how we use AI. We ask questions we already know the answer to. We prompt AI with our existing belief and feel validated when it agrees. Real critical thinking — with AI or with data — means actively trying to disprove your hypothesis, not decorate it with confirmation."

Why It Works

Peter Wason designed the 2-4-6 Task in 1960 to study hypothesis testing. His finding was striking: people overwhelmingly use a positive test strategy — they only test instances they expect to be positive examples of their rule. They rarely test instances they expect to VIOLATE their rule, even though disconfirming evidence is far more informative.

This is confirmation bias in its purest form. The task is elegant because:

- The starting example (2, 4, 6) strongly suggests "increasing by 2"

- Every "add 2" test returns "yes," reinforcing the wrong hypothesis

- The actual rule ("ascending order") is much simpler and broader

- Only a test the guesser expects to FAIL (a disconfirming test) can reveal the true rule
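
The points above can be checked mechanically. The sketch below compares the tempting hypothesis ("add 2") with the actual rule ("ascending order") and reports, for each test, whether the answer could have contradicted the hypothesis. It assumes the room's working hypothesis is "add 2" and that the rule is strictly ascending; all function names are hypothetical helpers written for this illustration.

```python
def add_two(a, b, c):
    """The tempting hypothesis: each number is 2 more than the previous one."""
    return b - a == 2 and c - b == 2


def ascending(a, b, c):
    """The facilitator's actual rule (assumed strictly ascending)."""
    return a < b < c


def classify_test(triple, hypothesis=add_two):
    """'positive' if the guesser expects a Yes under the hypothesis, else 'negative'."""
    return "positive" if hypothesis(*triple) else "negative"


def refutes_hypothesis(triple, hypothesis=add_two, true_rule=ascending):
    """A test refutes the hypothesis when its prediction differs from the real answer."""
    return hypothesis(*triple) != true_rule(*triple)


tests = [(8, 10, 12), (20, 22, 24), (100, 102, 104), (1, 5, 100), (6, 4, 2)]
for t in tests:
    print(t, classify_test(t), "| refutes 'add 2':", refutes_hypothesis(t))
```

Because every "add 2" triple is also ascending, a positive test can never return a surprising answer here. Only a negative test such as 1, 5, 100 exposes the mismatch. A test like 6, 4, 2 does not refute "add 2", but its "No" helps locate where the real rule's boundary lies.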

The activity is universally effective because it catches EVERYONE — scientists, engineers, educators, students. Intelligence doesn't protect against confirmation bias. Only awareness and deliberate strategy do.

Research Citation: Wason, P.C. (1960). On the failure to eliminate hypotheses in a conceptual task. Quarterly Journal of Experimental Psychology, 12(3), 129-140.

Teacher Tip

Be a poker player. Don't telegraph the answer through your facial expressions. When they test "8, 10, 12" and you say "Yes," be neutral. When someone finally tests "1, 5, 100" and you say "Yes," be equally neutral. The moment they realize their entire strategy was flawed should come from their own reasoning, not from your reactions.

Variations

For Different Subjects

- Science: This is the heart of the scientific method. "Hypothesis testing requires trying to FALSIFY your hypothesis, not just confirm it. Karl Popper argued that a scientific claim must be falsifiable: there has to be some possible observation that would prove it wrong."

- Math: "In proofs, checking that your formula works for 5 examples doesn't prove it's correct. Finding ONE counterexample proves it's wrong. Which strategy is more powerful?"

- History: "When we study history with a thesis already in mind, we tend to collect only supporting evidence. What evidence did you IGNORE because it didn't fit your narrative?"

- AI Education: "Don't just ask AI to confirm what you believe. Ask it to argue AGAINST you. If you think climate change is human-caused, ask AI for the strongest counterarguments. If you think a certain policy is good, ask AI for its weaknesses. This is how you actually THINK, not just validate."

- Research Methods: "This is why null hypothesis testing matters. This is why peer review exists. This is why replication studies are essential. Many of the safeguards in science exist to counter confirmation bias."
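
The Math variation above can be made concrete with a classic example that is not part of the original activity: Euler's polynomial n² + n + 41. The claim "this formula always produces a prime" survives the first five checks (and many more), yet a single counterexample sinks it. The sketch below is one way to show that in class; the helper names are illustrative.

```python
def is_prime(n):
    """Trial-division primality check, adequate for the small values used here."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def formula(n):
    """Euler's polynomial, used here as the claim under test."""
    return n * n + n + 41


def first_counterexample(limit=100):
    """Return the smallest n in [0, limit) where formula(n) is not prime, else None."""
    for n in range(limit):
        if not is_prime(formula(n)):
            return n
    return None


# Five confirming checks all pass...
print([is_prime(formula(n)) for n in range(5)])
# ...but searching for a failure finds one.
print(first_counterexample())  # 40, because formula(40) == 41 * 41
```

Students who stop after the confirming checks walk away with a false belief. The search for a failure is what settles the question.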

For Different Settings

- Large Audience (50+): Have them shout out suggestions. Take 3-4 from different parts of the room each round.

- Small Class (5-15): Go around the room — each student gives one set of three numbers. More individual involvement.

- Workshop/PD: After the reveal, have groups design their own "2-4-6 style" puzzles for their subject area and test them on other groups.

For Different Ages

- Elementary (K-5): Simplify: "I'm thinking of a rule about shapes. This triangle follows my rule. Does this square follow my rule? (Yes.) Does this circle? (Yes.) What's my rule?" (Rule: any shape.) They'll guess "shapes with straight sides" and be surprised when circles work too.

- Middle/High School (6-12): Full 2-4-6 version works perfectly.

- College/Adult: Full version with deeper debrief on Wason, Popper, and falsifiability. Connect to professional decision-making: "When was the last time you tried to disprove your own idea at work?"

Online Adaptation

Tools Needed: Chat or shared document

Setup: Type "2, 4, 6" in the chat or on a shared screen.

Instructions:

- "Type your three numbers in the chat. I'll reply Yes or No."

- Process suggestions one at a time. Type your response publicly.

- After 4-5 confirmations, ask: "Who thinks they know the rule? DM me your guess."

- Respond privately: "No, that's not the rule. Keep testing."

- Continue until someone tries a genuinely different set.

Pro Tip: The chat log creates a perfect record of the confirmation bias in action. After the reveal, scroll back and ask: "Look at the first 10 suggestions. How many were designed to confirm vs. to disprove?" The answer is almost always 10-0.
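
If the chat log can be exported as text, the count described in the Pro Tip can be automated. The following is a rough sketch, assuming each suggestion is a message containing three numbers separated by commas or spaces, and assuming the room's working hypothesis is "add 2". The function names and the sample log are made up for illustration.

```python
import math
import re


def parse_triple(message):
    """Extract the first three numbers from a chat message, or None if there are fewer."""
    numbers = re.findall(r"-?\d+(?:\.\d+)?", message)
    if len(numbers) < 3:
        return None
    return tuple(float(x) for x in numbers[:3])


def fits_add_two(triple):
    """True when the triple matches the assumed working hypothesis 'add 2'."""
    a, b, c = triple
    return math.isclose(b - a, 2) and math.isclose(c - b, 2)


def tally(messages):
    """Count suggestions that fit 'add 2' (confirming) versus those that do not."""
    counts = {"confirming": 0, "disconfirming": 0}
    for message in messages:
        triple = parse_triple(message)
        if triple is None:
            continue  # skip guesses and chatter that contain no triple
        key = "confirming" if fits_add_two(triple) else "disconfirming"
        counts[key] += 1
    return counts


# Hypothetical chat log for demonstration only.
sample_log = ["8, 10, 12?", "20, 22, 24", "100 102 104", "I think it's add 2", "1, 5, 100?"]
print(tally(sample_log))  # {'confirming': 3, 'disconfirming': 1}
```

Real chat exports vary by platform, so the parsing step would likely need adjusting. Messages that use hyphens as separators, for example, would be misread as negative numbers.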

Troubleshooting

Challenge: Someone guesses "ascending order" on the first try.

Solution: Rare, but if it happens, ask HOW they knew. "What test did you run that eliminated 'add 2'?" Usually they guessed, which is not the same as testing. If they actually tested disconfirming evidence first (e.g., tried "1, 5, 100" early), celebrate them: "You did what almost nobody does. You tried to BREAK your theory instead of confirming it."

Challenge: The activity runs long because nobody tries disconfirming tests.

Solution: After 3-4 confirming rounds, say: "I notice every test so far has been numbers going up by 2. What if you tried something COMPLETELY different?" This hint preserves the lesson while preventing the activity from dragging.

Challenge: Students feel embarrassed about being wrong.

Solution: Emphasize universality: "This isn't about intelligence. Peter Wason ran the original task with university students, and the same thing happened. Confirmation bias affects everyone. The difference is whether you're AWARE of it."

Extension Ideas

- Deepen: Have students apply the lesson immediately. Give them a claim: "Students learn better with music playing." Ask them to generate 5 test questions — they'll default to confirming questions. Then challenge them: "Now generate 5 questions designed to DISPROVE this claim." Compare the two sets.

- Connect: For one week, keep a "confirmation bias journal." Every time you search for information that supports what you already believe — online, in conversation, or using AI — note it. At the end of the week, review: "How often did I try to disprove my own beliefs?"

- Follow-up: Introduce the practice of "steelmanning" — articulating the strongest version of an argument you DISAGREE with. This is the intellectual equivalent of testing "1, 5, 100" when you think the rule is "add 2."

Related Activities: The X Activity, How Many Squares, Invisible Gorilla